Real World Mobile Eye Tracking

Learn how to analyze mobile eye tracking data more easily.

Virtual reality simulations can be used to analyze eye movements more systematically under lifelike conditions.

A paper on our algorithms for generating heat maps for 3D models will be presented at the 2016 Symposium on Eye Tracking Research and Applications in Greenville.

A paper on the new version, EyeSee3D 2.0, will be presented at the 2016 Symposium on Eye Tracking Research and Applications in Greenville.

Patrick Renner is presenting EyeSee3D as part of the tutorial “Eye-tracking and Visualization”, which we organized together with Tanja Blascheck, Kuno Kurzhals, and Michael Raschke.

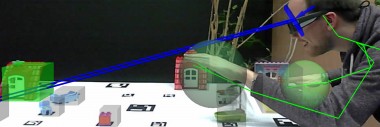

A recent project using EyeSee3D technology to analyze eye-gaze data of two interaction partners was presented at ECEM 2015 in Vienna.

In the project “Modelling Partners” of the Collaborative Research Center 673 on “Situated Communication” at Bielefeld University, gaze data of two interaction partners wearing eye-tracking systems was analyzed to study joint attention behavior.

The first paper on EyeSee3D was presented at the 2014 Symposium on Eye Tracking Research and Applications in Florida.